Controllable Generation in Diffusion and Flow-Based Models

📅 Published:

📘 TABLE OF CONTENTS

- Part I — Foundations and Preliminaries

- Part II — Inference-time Control

- 3. Explicit Guidance with External Gradients

- 4. Implicit Guidance

- 5. Advances in Classifier-Free Guidance

- 6. Guidance via Measurements (Inverse Problems)

- 7. Inference-time Image Editing

- Part III — Inference-time Optimization

- Part IV — Post-Training For Concept Customization and Consistency Preservation

- 10. Retraining-Based Personalization for New Concept Injection

- 11. Training-Free For New Concepts

- Part V — Post-Training For Prompt-following and Editing-following

- 13. Generalist Vision Instruct Tuning

Diffusion models and modern flow-based generative models, including flow matching and rectified flow, can be understood as learned transport processes: they transform simple noise into structured data by following a time-dependent denoising, score, or velocity field. In practical systems, however, generation is rarely used as an unconstrained sampling procedure. Users expect the model to follow text prompts, preserve identities, obey spatial layouts, respect editing instructions, satisfy physical or measurement constraints, and sometimes adapt to entirely new concepts from only a few reference images.

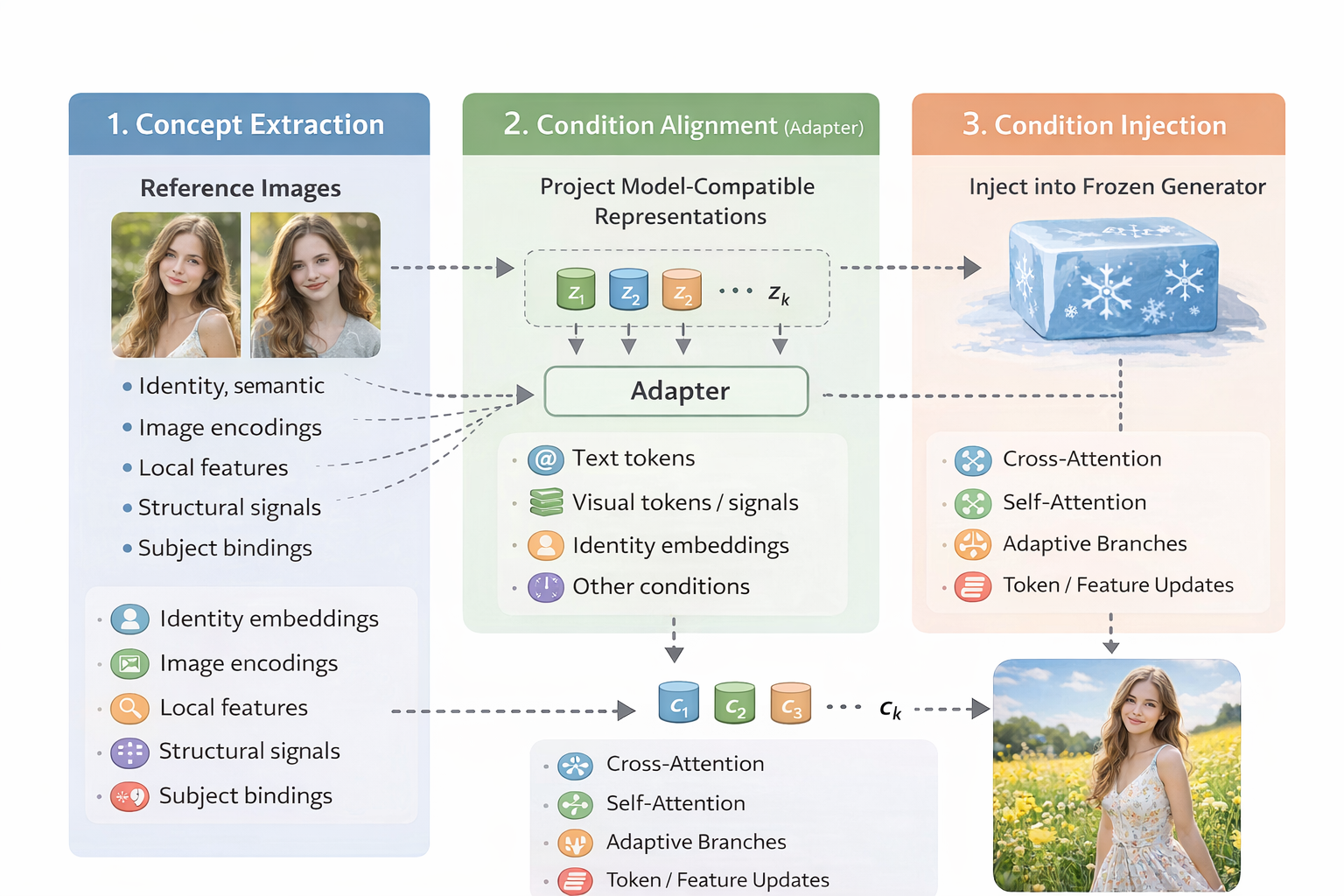

This broader requirement gives rise to guided and controlled generation. At a high level, controllability means reshaping the generative trajectory so that the final sample satisfies human-specified intent while still remaining on the natural image manifold. The control signal may be semantic, such as a class label or text prompt; structural, such as pose, depth, edges, segmentation, or bounding boxes; reference-based, such as identity, style, or subject images; or objective-based, such as a reward, measurement likelihood, preservation constraint, or editing instruction.

This article organizes the field from a unified dynamical perspective. Rather than treating classifier-free guidance, ControlNet, inversion-based editing, DreamBooth, IP-Adapter, identity-preserving personalization, and instruction-based editing as unrelated techniques, we view them as different ways of modifying, augmenting, or optimizing the generative dynamics. Some methods impose control natively during training; some extend a pretrained model through fine-tuning or plug-in modules; others steer a frozen model only at inference time. The goal of this article is to build a common language for these approaches and then use it to map the major families of controllable diffusion and flow-based generation.

Part I — Foundations and Preliminaries

Before discussing concrete algorithms, we first need a language in which seemingly different control mechanisms can be compared. Classifier guidance, classifier-free guidance, ControlNet-style structural control, inversion-based editing, and personalization methods may look very different at the implementation level, but they all modify the same object: the generative dynamics that transforms noise into data. In this part, we therefore build a parameterization-agnostic foundation for controllable generation. We begin by formulating diffusion and flow-matching samplers as learned direction fields, then explain how conditioning turns an unconditional field into a conditional one. Finally, we introduce an energy-based view and a two-axis taxonomy that will serve as the organizing principle for the rest of the article.

1. Problem Formulation: The Dynamics of Conditional Sampling

Modern diffusion models and flow-matching models can both be viewed as transport processes: they move samples between distributions by following a time-dependent dynamics in the data space. Let \(p(x)\) denote the (unconditional) data distribution. Unconditional generation aims to sample from \(p(x)\), whereas controllable generation aims to sample from a conditional distribution

\[p(x\mid c),\]where \(c\) denotes conditioning information (e.g., text, structural constraints, reference signals, or editing instructions). The key operational question is:

How do we inject conditioning information \(c\) into the sampling dynamics so that the terminal sample follows \(p(x\mid c)\) rather than \(p(x)\)?

A unifying observation is that most samplers—diffusion ODE/SDE samplers and flow-matching ODE samplers—are driven by a learned direction field (a vector field in the image/latent space). Conditioning, at its core, is the act of turning an unconditional direction field into a conditional one.

1.1 Recap: Unconditional Sampling as Integrating a Direction Field

To make the discussion parameterization-agnostic, we write the generative dynamics in a common ODE form:

\[\frac{d x_t}{d t} = u_{\theta}(x_t, t),\]where \(u_{\theta}\) is a learned direction field. Sampling corresponds to integrating this dynamics from a simple base distribution to the data distribution.

Time convention. We use the reverse-time convention common in diffusion sampling: \(t\!:\!1\to 0\) during generation, where \(p_1\) is the base distribution (typically Gaussian noise) and \(p_0\) is the data distribution. Some flow-matching papers use the forward-time convention \(0\to 1\); this is equivalent up to a time reparameterization.

Diffusion models (probability-flow ODE view). Starting from a forward SDE

\[d x = f(t) x\, dt + g(t)\, dW_t,\]the associated probability-flow ODE has drift

\[u_{\theta}(x_t, t) = f(t) x_t - \frac{1}{2} g^2(t)\, s_{\theta}(x_t, t),\]where \(s_{\theta}(x_t,t)\approx \nabla_{x_t}\log p_t(x_t)\) is the (unconditional) score.

Flow matching / rectified flow. The sampler integrates a learned velocity field:

\[u_{\theta}(x_t, t) = v_{\theta}(x_t, t),\]where \(v_{\theta}\) transports samples along a path of marginals \(\{p_t\}\) connecting base and data.

1.2 From Unconditional to Conditional Direction Fields

Controllable generation requires the entire path to become conditional: instead of marginals \(p_t(x)\) we need \(p_t(x\mid c)\) for all \(t\). This, in turn, requires a conditional direction field:

\[\frac{d x_t}{d t} = u_{\theta}(x_t, t, c).\]Two common instantiations are:

Diffusion: the target becomes the conditional score

\[s_{\theta}(x_t, t, c) \;\approx\; \nabla_{x_t} \log p_t(x_t\mid c),\]and the probability-flow drift is obtained by substituting the conditional score into the drift formula.

Flow matching: we seek a conditional velocity field \(v_{\theta}(x,t,c)\) whose induced density evolution satisfies the conditional continuity equation

\[\frac{\partial p_t(x\mid c)}{\partial t} + \nabla_x \cdot \big(p_t(x\mid c)\, v_{\theta}(x,t,c)\big)=0.\]

This yields a concise operational summary: integrate dynamics driven by \(u_{\theta}(x_t,t)\) for unconditional generation; integrate dynamics driven by \(u_{\theta}(x_t,t,c)\) for conditional generation.

The core challenge of controllable generation is therefore: how do we obtain a reliable conditional field \(u_{\theta}(\cdot,\cdot,c)\) — by changing training, adding modules, fine-tuning, or modifying the sampler?

1.3 Unified Explicit Conditions and Implicit Constraints By Energy Function

So far, “controllability” has been described as explicit conditioning: we provide an input signal \(c\) (text, pose, depth, reference image, etc.) and learn a conditional direction field

\[\frac{d x_t}{d t} = u_{\theta}(x_t, t, c).\]This covers native conditioning mechanisms (cross-attention, AdaLN/AdaGN, feature injection via plug-ins, and fine-tuning).

However, many practically important controls are more naturally specified as constraints, objectives, or measurements rather than as an extra input channel. Examples include:

- measurement consistency in inverse problems (given \(y\) and a forward operator \(A\)),

- feasibility constraints (geometry/layout/multi-view consistency),

- editing requirements: “change this, but preserve that,”

- external objectives (aesthetics, safety, detectors, readability).

To unify both cases, a more general unification emerges through the energy function formalism. Any constraint — whether explicit conditions or implicit constraints — can be expressed as an energy function $E(x, c)$ that measures the degree to which $x$ satisfies the constraint specified by $c$:

\[p(c | x) = \frac{\exp(-E(x, c))}{Z}\]where $Z=\int \exp(-E(x, c)) dx$, according to bayes rule

\[\begin{align} p(x | c) & = \frac{p(x) \cdot p(c|x)}{p(c)} \\[10pt] \Longrightarrow\quad p(x|c) & \propto p(x) \cdot p(c|x) \\[10pt] \Longrightarrow\quad p(x|c) & \propto p(x) \cdot \exp(-E(x, c)) \\[10pt] \end{align}\]Taking the logarithm and gradient:

\[\nabla_x \log p(x | c) = \nabla_x \log p(x) - \nabla_x E(x, c).\]Genralize this to vector field and multiple constrains. Let \(u_{\text{base}}(x_t,t)\) denote the unconditional (or weakly conditioned) direction field used by the pretrained model. A controlled sampler integrates

\[\frac{d x_t}{d t} = u_{\text{ctrl}}(x_t,t;\mathcal{C}),\]where \(\mathcal{C}\) denotes a set of control requirements. We write the controlled direction as

\[u_{\text{ctrl}}(x_t,t;\mathcal{C}) = \underbrace{u_{\text{base}}(x_t,t)}_{\text{unconditional vector field}} \quad - \quad \sum_{k}\underbrace{\lambda_k(t)\,\Delta u(x_t,t;c_k)}_{\text{Energy Guidance}}.\]where

\[\Delta u(x_t,t;c) = \nabla_{x_t} E(x_t, t, c_k)\]There are two complementary ways to obtain \(\Delta u_k\):

Explicit Conditions via Learned Increments. This is a special case if we set the energy function

\[E(x_t,t;c)=-\log p_t(c\mid x_t).\]Differentiate both sides and apply Bayes’ rule

\[\begin{align} \nabla_{x_t} E(x_t, t, c_k) & = - \nabla_{x_t} \log p_t(c_k\mid x_t) \\[10pt] & = - \nabla_{x_t} (\log p_t(x_t\mid c_k) + \log p_t(c_k) - \log p_t(x_t)) \\[10pt] & = \nabla_{x_t} \log p_t(x_t) - \nabla_{x_t} \log p_t(x_t\mid c_k) \end{align}\]If we train a conditional model $u_{\theta}$ (or train a plug-in module on top of a frozen base) to approximate \(\nabla_{x_t} \log p_t(x_t\mid c_k)\), the increment is learned implicitly:

\[\Delta u(x_t,t;c_k)\approx u_{\text{base}}(x_t,t) - u_{\theta}(x_t,t,c_k).\]Implicit Constraints via Energy Increments. More generally, inverse problems can be written with a measurement likelihood energy, e.g.,

\[E_{\text{meas}}(x,t;y) \;\propto\; \left\|A\,\widehat{x}(x_t,t) - y\right\|_2^2,\]where \(\widehat{x}(x_t,t)\) denotes a chosen parameterization (often an \(x_0\) estimate from \(x_t\)). The resulting term \(-\nabla_{x_t}E_{\text{meas}}\) enforces data consistency during sampling.

Hard constraints can also be expressed via a projection / proximal step after an unconstrained update. Let \(\Pi_{\mathcal{S}}(\cdot)\) be the projection onto a feasible set \(\mathcal{S}\). A common template is:

- unconstrained step: \(x \leftarrow x + \Delta t \, u_{\text{base}}(x,t)\)

- projection: \(x \leftarrow \Pi_{\mathcal{S}}(x).\)

This corresponds to a proximal update for an indicator energy \(E_{\mathcal{S}}(x)=\mathbf{1}_{x\notin \mathcal{S}}\), and is useful when the constraint is easier to enforce than to differentiate.

Editing as “goal + preserve” constraints. Editing can be written compactly as a control tuple

\[c_{\text{edit}}=(x_{\text{src}},e,m),\]where \(x_{\text{src}}\) is the source image, \(e\) is an edit instruction (often text), and \(m\) is an optional mask. A generic energy decomposition is

\[E_{\text{edit}}(x_t,t;c_{\text{edit}}) = E_{\text{goal}}(x_t,t;e,m) + \beta(t)\,E_{\text{pres}}(x_t,t;x_{\text{src}},m).\]Different editing methods mainly differ in how \(E_{\text{pres}}\) is chosen/implemented (pixel constraints, feature constraints, attention constraints, inversion-based initialization) and how \(\beta(t)\) is scheduled.

Takeaway: The connection between energy functions and probability distributions is established through the Boltzmann distribution (also known as the Gibbs distribution): $$ p(x) = \frac{1}{Z} \exp(-E(x)), $$ where $Z = \int \exp(-E(x)) \, dx$ is the partition function ensuring normalization. This relationship reveals a fundamental correspondence:

| Energy $E(x)$ | Probability $p(x)$ | Interpretation |

|---|---|---|

| Low | High | Desirable states are likely |

| High | Low | Undesirable states are unlikely |

2. A Two-Axis Taxonomy of Controllable Generation

Having established that controllable generation amounts to obtaining a conditional direction field \(u_{\theta}(x_t,t,c)\) , we now introduce a compact taxonomy for organizing the method space. We characterize controllable generation by two largely orthogonal axes:

Axis I — temporal: When is control imposed?

Control can be baked into pretraining, added after pretraining (via fine-tuning or plug-in module training), or applied purely at inference time.Axis II — signal: What is the conditioning signal? Conditions may be semantic (text / labels), structural (pose / depth / edges / segmentation), reference-based (identity / style exemplars), or editing constraints (source image + instruction + masks).

These axes are mostly independent: the same signal type can be implemented with different temporal strategies, and the same temporal strategy can support different signals. This orthogonality provides a practical “coordinate system” for reading and designing controllable generation methods.

┌──────────────────────────────────────────────┐

│ Controllable Generation Space │

└──────────────────────────────────────────────┘

│

┌──────────────────────────────┼──────────────────────────────┐

│ │ │

▼ ▼ ▼

┌──────────────────┐ ┌────────────────────┐ ┌──────────────────┐

│ Training-Time │ │ Post-Training │ │ Inference-Time │

│ Control │ │ (Fine-tune / Plug) │ │ Control │

└────────┬─────────┘ └─────────┬──────────┘ └────────┬─────────┘

│ │ │

▼ ▼ ▼

┌─────────────────────────────────────────────────────────────────────────────────┐

│ Semantic (text/labels) Structural (pose/depth/edge/seg) Reference/Editing │

└─────────────────────────────────────────────────────────────────────────────────┘

2.1 Axis I: When is control imposed ?

The temporal axis classifies methods by when the conditioning mechanism is introduced in the model lifecycle. This strongly affects flexibility, compute, and what kinds of signals can be supported.

Training-time control. Conditioning is incorporated from the outset: the architecture is designed to accept \(c\), and the objective is trained on paired data \(\{(x_i,c_i)\}_{i=1}^N\) so the model learns \(p_\theta(x\mid c)\) directly.

A representative diffusion training objective (in the common \(\epsilon\)-prediction parameterization) is:

\[\mathcal{L}_{\text{cond}} = \mathbb{E}_{t,(x_0,c),\epsilon}\left[\lambda(t)\left\|\epsilon_\theta(x_t,t,c)-\epsilon\right\|_2^2\right],\]where \(x_t\) is obtained by noising \(x_0\) with schedule-dependent coefficients. For flow matching / rectified flow, the same template applies after replacing \(\epsilon_\theta\) with a velocity predictor and \(\epsilon\) with the corresponding target velocity.

Common architectural “conditioning sockets” include:

- Cross-attention: visual features attend to an encoded condition (e.g., text tokens), enabling content-dependent modulation.

- Adaptive normalization (FiLM-style): normalization statistics are modulated by condition embeddings (AdaGN, AdaLN, AdaLN-Zero, etc.), enabling global style/strength control.

- Spatial injection: concatenate or add spatial condition maps/features to intermediate feature maps for pixel-aligned control.

Post-training control (fine-tuning / plug-in training). Starting from a pretrained model \(\theta^*\), we adapt controllability without training from scratch. A generic objective keeps the same form,

\[\mathcal{L}_{\text{adapt}} = \mathbb{E}_{t,(x_0,c),\epsilon}\left[\lambda(t)\left\|\epsilon_\theta(x_t,t,c)-\epsilon\right\|_2^2\right],\]but differs in what parameters are allowed to change.

Typical subcategories:

Full fine-tuning: update all parameters \(\theta\) (most flexible, but data-hungry; risks overfitting/forgetting).

Parameter-efficient fine-tuning (PEFT): update only a small set of parameters or low-rank updates (e.g., LoRA, adapters, prompt tuning).

Embedding learning: freeze the generator and learn only a new embedding / token representation (e.g., textual inversion).

Plug-in module training: freeze (most of) the base generator and train auxiliary modules that inject new signals through existing sockets. (e.g., ControlNet / T2I-Adapter for structural conditions, IP-Adapter-style modules for reference images).

Inference-time control. Inference-time methods control a frozen pretrained model by modifying the sampling dynamics (without updating parameters). For score-based formulations, a useful identity is the Bayes decomposition:

\[\nabla_{x_t}\log p_t(x_t\mid c) \;=\; \nabla_{x_t}\log p_t(x_t) \;+\; \nabla_{x_t}\log p_t(c\mid x_t).\]More broadly, inference-time control can be written as adding one or more energy/constraint gradients to the base field:

\[u_{\text{ctrl}}(x_t,t) = u_{\text{base}}(x_t,t) - \sum_k \lambda_k(t)\,\nabla_{x_t}E_k(x_t,t;c_k),\]where the Bayes guidance term corresponds to \(E(x_t,t;c)=-\log p_t(c\mid x_t)\). This same form also captures data-consistency terms in inverse problems and other controllable objectives (aesthetics, safety, detectors, etc.).

When a constraint is easier to enforce than to differentiate, one may instead apply a projection/proximal step after each update (see Section 1.3).The first term is provided by the generative model; the second term is a guidance signal that can be estimated or engineered.

Common inference-time strategies include:

Classifier guidance: train a noisy-image classifier \(p_\phi(c\mid x_t)\) and guide sampling by its gradient:

\[\tilde{s}_\theta(x_t,t,c) = s_\theta(x_t,t) + w\,\nabla_{x_t}\log p_\phi(c\mid x_t),\]where \(w\) controls the guidance strength.

Classifier-free guidance (CFG): train with random condition dropout so the same network provides conditional and unconditional predictions:

\[\tilde{\epsilon}_\theta(x_t,t,c) = \epsilon_\theta(x_t,t,\varnothing) + w\big(\epsilon_\theta(x_t,t,c)-\epsilon_\theta(x_t,t,\varnothing)\big),\]where \(\varnothing\) denotes the null condition. (The mechanism is inference-time, even though it requires training-time dropout.)

Energy / constraint guidance: define an energy \(E(x_t,c)\) encoding the desired constraint and guide with its gradient:

\[\tilde{s}_\theta(x_t,t,c) = s_\theta(x_t,t) - \nabla_{x_t}E(x_t,c).\]Attention / feature manipulation: modify attention maps or intermediate features during inference (common in editing and reference-based control).

Sampler / schedule knobs: change solvers, timesteps, rescaling, and other sampling hyperparameters to trade off fidelity, diversity, and control strength.

2.2 Axis II: What is the condition signal?

Orthogonal to the temporal axis, we categorize methods by the nature of the conditioning signal. Different signal types impose different requirements on how information must be encoded and injected.

Semantic conditioning. Semantic conditions specify what should be generated (high-level concepts, attributes, or natural language). They typically do not fix spatial layout.

Examples: class labels (“dog”), text prompts, attribute tags (“vintage”, “futuristic”).

Challenge: the mapping \(c\mapsto p(x\mid c)\) is highly under-specified—one prompt corresponds to a large equivalence class of valid images.

Typical injection: encode text into tokens and inject via cross-attention:

\[\mathrm{Attention}(Q,K,V)=\mathrm{softmax}\!\left(\frac{QK^\top}{\sqrt{d}}\right)V,\]where \(Q\) comes from visual features and \(K,V\) come from the condition tokens.

Structural conditioning. Structural conditions specify where/how content should be arranged (layout, geometry, composition). They are often spatially aligned with the output.

Examples: edge maps, depth/normal maps, pose keypoints, segmentation masks, bounding boxes.

Prototype form:

\[c_{\text{struct}}\in\mathbb{R}^{H\times W\times C_s},\]with explicit spatial correspondence to the output.

Typical injection: multi-scale encoders + spatial feature injection (add/concat/residual), sometimes combined with spatial attention.

Reference-based conditioning. Reference conditions provide exemplar images to specify identity, style, texture, or composition.

Examples: subject identity (person/object), style exemplars, “generate like this reference”.

Goal: transfer selected properties \(\Phi(x_{\text{ref}})\) while allowing new content/poses/contexts.

Typical injection: an image encoder extracts features/tokens, which are injected via dedicated attention pathways or feature injection at multiple scales.

Editing constraints. Editing conditions specify how to transform an existing image while preserving unedited regions.

A convenient abstraction is:

\[c_{\text{edit}}=(x_{\text{src}},\, e,\, m),\]where \(x_{\text{src}}\) is the source image, \(e\) is an edit instruction (often text), and \(m\) is an optional spatial mask.

Typical building blocks: inversion (map \(x_{\text{src}}\) to a compatible latent/trajectory), spatial masking, and attention/feature constraints to preserve identity and structure outside the edited region.

Next, we will use the Axis I to explain how controllable and guided generation can be implemented at different stages.

Part II — Inference-time Control

In this case, control is applied only at sampling time, without retraining the model and without solving an additional optimization problem during inference. The generation process is steered directly through mechanisms such as guidance, attention manipulation, feature injection, or prompt-based control. Classifier-free guidance and attention-based editing methods fall into this category.

3. Explicit Guidance with External Gradients

Part I framed controllable generation as modifying a pretrained direction field during sampling. Concretely, given a frozen generator that defines a base dynamics \(u_{\text{base}}(x_t,t)\), inference-time control constructs a controlled dynamics

\[\frac{d x_t}{d t}= u_{\text{ctrl}}(x_t,t;\mathcal{C}) = u_{\text{base}}(x_t,t) - \sum_k \lambda_k(t)\,\nabla_{x_t}E_k(x_t,t;c_k),\]where each control requirement \(c_k\) contributes an energy term \(E_k\) whose gradient is injected into the sampling flow.

This chapter focuses on a particularly “direct” family: explicit guidance with external gradients. The defining property is: The guidance term is computed by backpropagating through an external differentiable model (a classifier, a vision–language model, a detector, an aesthetic scorer, etc.), producing an explicit gradient \(\nabla_{x_t}E(x_t,t;c)\) used to steer a frozen generator.

Historically, this line starts with classifier guidance, then generalizes naturally to CLIP and other learned reward/energy models. Although powerful, explicit guidance also exposes several fundamental limitations—many of which motivate the “implicit guidance” era (CFG) in Chapter 4.

3.1 Classifier Guidance (CG)

For score-based diffusion, an ideal conditional sampler would use the conditional score \(\nabla_{x_t}\log p_t(x_t\mid c)\). The key identity behind classifier guidance is the Bayes score decomposition:

\[\nabla_{x_t}\log p_t(x_t\mid c) = \nabla_{x_t}\log p_t(x_t) + \nabla_{x_t}\log p_t(c\mid x_t).\]we already highlighted this decomposition in Part I as the conceptual bridge from “unconditional direction fields” to “controlled direction fields.”

Classifier guidance 1 operationalizes the second term by training a noise-conditional classifier \(p_\phi(c\mid x_t,t)\) and injecting its gradient:

\[g_\phi(x_t,t;c) \;\triangleq\; \nabla_{x_t}\log p_\phi(c\mid x_t,t).\]Equivalently, this is an energy guidance term with

\[E_\phi(x_t,t;c) = -\log p_\phi(c\mid x_t,t)\Longrightarrow \nabla_{x_t}E_\phi(x_t,t;c) = -\nabla_{x_t}\log p_\phi(c\mid x_t,t)\]which is exactly the “explicit condition as energy” special case you wrote in Section 1.3. Let \(s_\theta(x_t,t)\approx\nabla_{x_t}\log p_t(x_t)\) denote the pretrained (unconditional) score. Classifier guidance constructs a guided score

\[\tilde{s}(x_t,t;c) = s_\theta(x_t,t) + w(t)\,g_\phi(x_t,t;c),\]where \(w(t)\ge 0\) is a guidance scale (often time-dependent). To connect back to our parameterization-agnostic ODE view, note that many samplers can be written as integrating a direction field \(u(\cdot)\). For example, in the probability-flow ODE form of diffusion, the drift has the schematic structure “base drift minus score term,” so replacing \(s_\theta\) by \(\tilde{s}\) yields the controlled direction field:

\[u_{\text{ctrl}}(x_t,t;c) = u_{\text{base}}(x_t,t) \;+\;\underbrace{\lambda(t)\,g_\phi(x_t,t;c)}_{\text{explicit external gradient}},\]for an appropriate scalar \(\lambda(t)\) determined by the chosen ODE/SDE parameterization and solver. The important point is structural: classifier guidance is precisely your energy-guidance template

\[u_{\text{ctrl}} = u_{\text{base}} + \lambda(t)\,\nabla_{x_t}E_\phi(x_t,t;c),\]with \(E_\phi=-\log p_\phi(c\mid x_t,t)\). This also extends cleanly to flow matching / rectified flow: if the pretrained model provides a base velocity field (v_\theta(x_t,t)), we simply steer it by adding the external gradient increment:

\[v_{\text{ctrl}}(x_t,t;c) = v_\theta(x_t,t) + \lambda(t)\,g_\phi(x_t,t;c).\]Key viewpoint: Classifier guidance is not a separate “diffusion trick” — it is simply the energy-guidance template with \(E_\phi(x_t,t;c)=-\log p_\phi(c\mid x_t,t)\). The guided sampler is obtained by adding the external gradient increment to the frozen base field.

3.2 CLIP Guidance

Classifier guidance uses a discriminative model \(p_\phi(c\mid x_t,t)\) that outputs a probability. CLIP guidance 2 replaces this with a more flexible idea: Use a pretrained vision–language model as a differentiable compatibility scorer between an image and text, and backpropagate its score to obtain a guidance direction.

Let \(f_I(\cdot)\) be the CLIP image encoder and \(f_T(\cdot)\) the text encoder. For a text prompt $c$, define an energy on images (or denoised estimates):

\[E_{\text{CLIP}}(x;c) = -\mathrm{sim}\big(f_I(x),\,f_T(c)\big),\]where \(\mathrm{sim}(\cdot,\cdot)\) is typically cosine similarity (or a scaled dot product). Lower energy means better alignment with the prompt. Then the CLIP guidance increment is simply

\[g_{\text{CLIP}}(x,t;c) = -\nabla_x E_{\text{CLIP}}(x;c) = \nabla_x \mathrm{sim}\big(f_I(x),\,f_T(c)\big).\]This fits our unified picture exactly: CLIP is just another choice of energy \(E(x,c)\), hence another instance of energy-gradient steering.

A subtle but important practical point: CLIP is trained on natural images, not heavily noised \(x_t\). Therefore, CLIP guidance is usually applied to a denoised estimate \(\hat{x}_0(x_t,t)\) rather than directly to \(x_t\).

A common choice is to use the diffusion model’s own prediction to form \(\hat{x}_0\). In an \(\epsilon\)-prediction parameterization, one often has the algebraic relation

\[\hat{x}_0(x_t,t) = \frac{x_t - \sigma(t)\,\epsilon_\theta(x_t,t)}{\alpha(t)}.\]Then define the energy at \(\hat{x}_0\):

\[E_{\text{CLIP}}(x_t,t;c) \;\triangleq\; -\mathrm{sim}\big(f_I(\hat{x}_0(x_t,t)),\,f_T(c)\big).\]The guidance vector in \(x_t\)-space is obtained by chain rule:

\[g_{\text{CLIP}}(x_t,t;c) = -\nabla_{x_t} E_{\text{CLIP}}(x_t,t;c) = \left(\frac{\partial \hat{x}_0}{\partial x_t}\right)^{\!\top} \nabla_{\hat{x}_0}\mathrm{sim}\big(f_I(\hat{x}_0),f_T(c)\big).\]This equation is conceptually important: explicit guidance often acts on a “proxy variable” (e.g., \(\hat{x}_0\)) for which the external model is meaningful, then maps gradients back to the actual state \(x_t\).

With the above definition, CLIP guidance is again just

\[u_{\text{ctrl}}(x_t,t;c) = u_{\text{base}}(x_t,t) + \lambda(t)\,g_{\text{CLIP}}(x_t,t;c),\]where \(\lambda(t)\) controls how aggressively we follow the external gradient. In practice, \(\lambda(t)\) is often made time-dependent to avoid over-steering in high-noise regimes and to prevent texture collapse near \(t\approx 0\). (This “guidance scheduling” theme will reappear in Chapter 4–5 in the CFG context.)

3.3 Limitations of Explicit Guidance

Explicit external-gradient guidance is conceptually clean—“define an energy, take its gradient, add it to the sampler.” Yet it exhibits several persistent limitations that shape the modern controllable-generation landscape.

Computational overhead: explicit gradients are expensive. Both classifier guidance and CLIP guidance require computing \(\nabla_{x_t}E(\cdot)\), which means backpropagation at every sampling step. This cost scales with number of diffusion steps, resolution, and the size of the external model (classifier/CLIP/detector). This is one major reason CFG became the default: it approximates guidance using forward passes of the diffusion model itself, avoiding external backprop loops.

Time-dependent reliability: gradients are not equally meaningful across (t). Explicit guidance depends on the external model being meaningful on the inputs it sees.

- A classifier \(p_\phi(c\mid x_t,t)\) must be noise-robust across all $t$.

- CLIP guidance is usually applied on \(\hat{x}_0\), but \(\hat{x}_0\) is itself an estimate whose quality varies with \(t\).

As a result, the guidance may be: too noisy / unstable at high noise levels (early steps), or too sharp / texture-collapsing at low noise levels (late steps), which forces practitioners to introduce heuristic schedules \(w(t)\) or \(\lambda(t)\).

Gradient mismatch: “optimize the scorer” is not always “optimize the sample quality”. External models can be exploited.

- A classifier gradient can drive \(x_t\) into regions that are highly class-confident but not realistic.

- CLIP gradients can encourage “CLIP-adversarial” patterns that raise similarity without improving perceptual fidelity.

From your unified-control viewpoint, this is an energy design issue: an energy can be differentiable and still be a poor proxy for the desired conditional distribution \(p(x\mid c)\).

Over-steering reduces diversity and can distort semantics. Increasing the guidance weight often improves constraint satisfaction but reduces sample diversity, introduces oversaturation / artifacts, and can distort fine details (especially in high-resolution synthesis).

This is the classic “control–diversity” tension: adding a strong external gradient reshapes the trajectory too aggressively and can pull it away from the base model’s natural manifold.

Engineering fragility: preprocessing and scaling matter a lot. CLIP guidance in particular is sensitive to: image normalization and resizing, prompt formatting, augmentation strategies used to stabilize gradients, and how \(\hat{x}_0\) is formed.

All these factors can dominate outcomes, making explicit guidance less “plug-and-play” than it looks on paper.

4. Implicit Guidance

Chapter 3 introduced the most direct control paradigm: explicitly define an external energy \(E(x_t,t;c)\) (classifier, CLIP, reward model, detector) and steer sampling by injecting its gradient \(-\nabla_{x_t}E\). This view is clean and general, but it is often slow (backprop at every step) and fragile (the external gradient may be unreliable across noise levels).

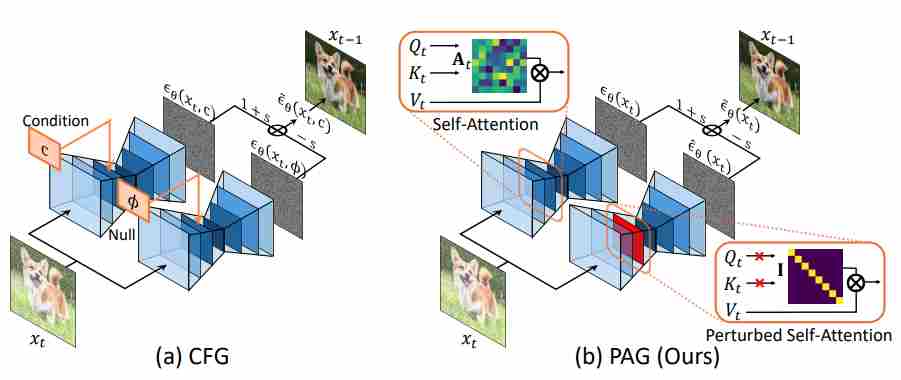

This chapter explains the modern alternative: implicit guidance, where the guidance increment is obtained without an external gradient model. Instead, the generative model itself provides both (i) a baseline direction and (ii) a condition-specific direction, and guidance becomes a simple linear composition of two predictions. The canonical instance is Classifier-Free Guidance (CFG).

4.1 Classifier-Free Guidance (CFG)

Classifier guidance (CG) needs a separate noisy-image classifier \(p_\phi(c\mid x_t,t)\). CFG removes this dependency by ensuring the same network can produce:

- a conditional prediction given \(c\), denoted as \(u_\theta(x_t,t,c)\).

- an unconditional prediction given a null condition \(\varnothing\), denoted as \(u_\theta(x_t,t,\varnothing)\).

typically enabled by random condition dropout during training. Concretely, the model is trained so that with some probability it receives $c$, and otherwise it receives \(\varnothing\). At inference, we evaluate both branches and combine them. CFG constructs a guided field by linear extrapolation:

\[u_{\text{cfg}}(x_t,t;c) = u_{\text{uncond}}(x_t,t) + w\,\Big(u_{\text{cond}}(x_t,t;c)-u_{\text{uncond}}(x_t,t)\Big),\]where

\[u_{\text{uncond}}(x_t,t)\;\triangleq\;u_\theta(x_t,t,\varnothing), \qquad u_{\text{cond}}(x_t,t;c)\;\triangleq\;u_\theta(x_t,t,c).\]So CFG is “implicit guidance” precisely because the increment is obtained by differences of model predictions, rather than by differentiating an external energy.

Modern diffusion systems often predict \(\epsilon_\theta\) (noise), \(\hat{x}_{0,\theta}\) (denoised image), or a velocity/flow field \(v_\theta\). CFG applies to any of these because the mapping between these parameterizations is affine in the prediction, so differences are preserved. A standard practical form (for $\epsilon$-prediction) is:

\[\tilde{\epsilon}_\theta(x_t,t,c) = \epsilon_\theta(x_t,t,\varnothing) + w\Big(\epsilon_\theta(x_t,t,c)-\epsilon_\theta(x_t,t,\varnothing)\Big).\]Similarly, for $x_0$-prediction:

\[\tilde{x}_{0,\theta}(x_t,t,c) = x_{0,\theta}(x_t,t,\varnothing) + w\Big(x_{0,\theta}(x_t,t,c)-x_{0,\theta}(x_t,t,\varnothing)\Big),\]and for flow matching / rectified flow velocity fields:

\[v_{\text{cfg}}(x_t,t,c) = v_\theta(x_t,t,\varnothing) + w\Big(v_\theta(x_t,t,c)-v_\theta(x_t,t,\varnothing)\Big).\]What changes across parameterizations is the exact solver update, not the CFG composition rule.

4.2 The Role of Negative Prompts

Negative prompts are often introduced as a practical heuristic (“tell the model what not to generate”), but under the unified control view they have a clean interpretation: they instantiate a second constraint energy that penalizes undesired concepts.

Let \(c^+\) be the positive prompt and \(c^-\) be a negative prompt. There are two common ways to incorporate \(c^-\) in CFG pipelines.

Negative prompt as the unconditional branch (most common in practice). In many text-to-image systems, the “unconditional” branch is not literally \(\varnothing\), but instead uses a negative text embedding:

\[u_{\text{neg}}(x_t,t)\;\triangleq\;u_\theta(x_t,t,c^-).\]Then guidance is computed as:

\[u_{\text{cfg}}(x_t,t;c^+,c^-) = u_\theta(x_t,t,c^-) + w\Big(u_\theta(x_t,t,c^+)-u_\theta(x_t,t,c^-)\Big).\]This can be understood as steering the sample away from the “negative-conditioned” direction and towards the “positive-conditioned” direction. Operationally, it often yields cleaner images because the baseline already encodes “avoid these artifacts” rather than “do nothing.”

Negative prompt as an explicit subtractive constraint (more explicit, more general). A more explicit formulation treats negative prompting as adding an extra penalty term. In the direction-field view:

\[u_{\text{ctrl}} = u_{\text{uncond}} + \underbrace{w_+\big(u_{+}-u_{\text{uncond}}\big)}_{\text{positive prompts}} - \underbrace{ w_-\big(u_{-}-u_{\text{uncond}}\big)}_{\text{negative prompts}},\]where \(u_{+}=u_\theta(x_t,t,c^+)\) and \(u_{-}=u_\theta(x_t,t,c^-)\). This corresponds to optimizing a combined objective of the form:

\[\log p(c^+\mid x_t)\;-\;\alpha\,\log p(c^-\mid x_t),\]i.e., “increase compatibility with the desired concept while explicitly decreasing compatibility with the undesired concept.” This view is useful because it clarifies that negative prompting is not magic—it is simply multi-objective guidance.

4.3 Limitations of Standard CFG

While Classifier-Free Guidance (CFG) has become the cornerstone of controllable diffusion generation, its simplicity comes at a cost. At high guidance scales, CFG often produces images that appear over-saturated, over-smoothed, or overly stylized—phenomena frequently observed in models such as Stable Diffusion and Imagen.

These artifacts arise from two complementary perspectives: a probabilistic distortion of the underlying data distribution and a geometric deviation of the score function.

Probabilistic Perspective: Distortion of the Conditional Distribution. Under ideal conditions, a diffusion model with $ w = 1 $ samples from the true conditional distribution $ p(x\mid c) $, generating diverse and natural results. However, increasing the guidance weight $ w $ effectively modifies this distribution into a new, biased one:

\[p(x \mid c) \propto p(x \mid c)^w \, p(x)^{1-w}.\]This expression shows that CFG rebalances probability mass between the conditional and unconditional distributions. When $ w > 1 $, the model disproportionately favors samples with maximal conditional likelihood $ p(x\mid c) $ — in other words, the most stereotypical or prototypical examples of condition $ c $. As a result:

Mode collapse and Over-smoothing: The reweighted distribution \(p(x\mid c)^w\) becomes sharper (higher kurtosis), concentrating around a few high-probability modes. The model tends to reproduce “safe,” high-confidence examples and loses interest in natural variations and subtle textures, leading to overly smooth and homogeneous outputs.

Over-saturation: To achieve higher conditional likelihoods, the model exaggerates defining features of the condition. Colors and contrasts are amplified beyond natural levels—blue skies become ultramarine, grass turns neon green, and human skin takes on waxy perfection. The results are visually striking but physically implausible.

Score-Function Perspective: Gradient Amplification and Manifold Departure. In the score-based interpretation, CFG modifies the denoising direction as

\[\begin{align} {\mathrm g}_{\text{total}} & = {\mathrm g}_{\text{uncond}} + w\cdot ({\mathrm g}_{\text{cond}} - {\mathrm g}_{\text{uncond}}) \\[10pt] & = {\mathrm g}_\text{cond} + (w-1)\cdot ({\mathrm g}_\text{cond} - {\mathrm g}_\text{uncond}). \end{align}\]where \({\mathrm g}_{\text{cond}}\) and \({\mathrm g}_{\text{uncond}}\) denote the conditional and unconditional score estimates, respectively. The vector difference

\[\Delta {\mathrm g} = {\mathrm g}_{\text{cond}} - {\mathrm g}_{\text{uncond}}\]captures the semantic direction that moves samples toward higher conditional probability regions. The amplification term \((w-1)\Delta {\mathrm g}\) can be viewed as performing gradient ascent on an implicit “guidance energy”:

\[\mathcal{F}_{\text{CFG}} = \tfrac{1}{2}\|{\mathrm g}_{\text{cond}} - {\mathrm g}_{\text{uncond}}\|^2, \quad \nabla_{ {\mathrm g}_{\text{cond}} }\mathcal{F}_{\text{CFG}} = ({\mathrm g}_{\text{cond}} - {\mathrm g}_{\text{uncond}}).\]Each CFG update therefore corresponds to one step of gradient ascent:

\[{\mathrm g}_{\text{guided}} = {\mathrm g}_{\text{cond}} + (w-1)\cdot \nabla_{ {\mathrm g}_{\text{cond}} }\mathcal{F}_{\text{CFG}}.\]This means that the model amplifies the difference between conditional and unconditional predictions, effectively pushing denoising updates further along the conditional semantic direction. This implicit gradient-ascent behavior leads to several geometric and perceptual consequences:

Overshooting the data manifold: With small $w$, the update gently follows the manifold of realistic images. As $w$ grows, the ascent step becomes too aggressive, pushing samples beyond the manifold where training data are scarce, leading to unrealistic artifacts.

Gradient amplification and instability: The conditional difference $\Delta {\mathrm g}$ often contains noisy high-frequency components. Multiplying it by $(w-1)$ acts like increasing the learning rate in optimization, causing gradient explosion and unstable dynamics during denoising.

Loss of fine detail and color balance: Once the sample leaves the data manifold, the model’s local score estimates lose reliability. The denoiser “hallucinates” textures and color statistics, resulting in smooth, glossy, or over-saturated appearances.

Beyond the probabilistic and geometric distortions, standard CFG suffers from a more practical issue—its guidance scale $w$ is static across all denoising steps. However, the diffusion process is inherently non-stationary. Empirically, the optimal guidance strength follows a “weak–strong–weak” temporal pattern.

| Phase | Noise Level | Semantic State | Recommended (w(t)) | Reason |

|---|---|---|---|---|

| Early stage (high noise) | \(t\!\approx\!1\!\to\!0.7\) | Almost pure noise; semantics not yet formed; | Low $w$ (≈1–2) | Conditional gradients are noisy and unreliable; strong guidance destabilizes trajectories |

| Middle stage (medium noise) | \(t\!\approx\!0.7\!\to\!0.3\) | Semantic structure emerges | High $w$ (≈5–10) | Conditional direction becomes meaningful; stronger guidance enhances alignment |

| Late stage (low noise) | \(t\!\approx\!0.3\!\to\!0\) | Image approaches data manifold | Low $w$ (≈1–3) | Over-guidance distorts fine details and color balance |

early steps require mild conditioning for stability: in the early stage, the input is pure noise with almost no semantic information, and the gradient is extremely unstable, requiring small steps to proceed.

mid steps demand strong semantic enforcement for content alignment: in the mid-term stage, which is the critical moment for semantic guidance, it is necessary to increase the guidance.

late steps prefer gentle guidance to preserve realism. in the final stage, the semantic space stabilizes, and the focus shifts to detail refinement. Excessive step sizes or strong conditions may disrupt natural textures (such as causing waxy skin or color saturation) and could also lead to deviations from the data manifold. Therefore, small steps are required to proceed.

5. Advances in Classifier-Free Guidance

In chapter 4, we identified key limitations of standard CFG. To overcome these challenges, a series of CFG variants have emerged, each targeting different aspects of the problem. All these approaches together represent a coherent evolution toward adaptive, geometry-aware, and distribution-consistent guidance.

In the following sections, we examine each of these improvements in detail, highlighting their motivations, formulations, and empirical effects.

5.1 Geometric & Numerical Corrections (Staying on-Manifold)

In Sec. 4, we saw that standard CFG can become brittle at high guidance: The root cause is that a high guidance scale will make the model deviate from the true manifold, thus resulting in over-saturation, artifacts, and other undesirable phenomena in the generated outputs.

To make “off-manifold” concrete, it helps to write guidance in denoised space (a.k.a. the Tweedie / $x_0$ estimate). Let $\hat{x}_0^{c}(x_t)$ and $\hat{x}_0^{\varnothing}(x_t)$ be the conditional and unconditional denoised predictions at time $t$. The analysis can be conducted from the following three perspectives.

Extrapolation is built into large guidance. Standard CFG forms a linear mixture in prediction space:

\[\begin{align} \hat{x}_0^{\omega}(x_t) \;& =\; (1-\omega)\,\hat{x}_0^{\varnothing}(x_t) + \omega\,\hat{x}_0^{c}(x_t) \\[10pt] \;& =\; \hat{x}_0^{\varnothing}(x_t) + \omega\big(\hat{x}_0^{c}(x_t)-\hat{x}_0^{\varnothing}(x_t)\big). \end{align}\]When $\omega>1$, this is extrapolation beyond the segment between $\hat{x}_0^{\varnothing}$ and $\hat{x}_0^{c}$. A useful geometric picture (used explicitly by CFG++) is: the data manifold is piecewise linear locally, and extrapolation pushes the iterate outside that local linear patch. Once outside, the denoiser/score is not well-calibrated, so the dynamics become unreliable (hallucinated textures, weird colors, etc.).

- Renoising with guided noise creates a systematic drift. Sampling updates are not “denoise only”; they also contain a renoising component (e.g., DDIM has an explicit renoise term; higher-order solvers have analogous correction terms). Under VP-DDPM notation, DDIM can be written as:

- denoise step: compute \(\hat{x}_0(x_t)\).

- renoise step: map \(\hat{x}_0(x_t)\) back to \(x_{t-1}\) using a noise term.

The key insight emphasized by CFG++ is: CFG modifies the denoiser output and the renoising component. If the renoising part is also guided, it can introduce a nonzero offset from the “correct” manifold-consistent update, compounding across steps.

Optimization lens: CFG behaves like an overly-large gradient step. From the “energy lens” (Sec. 1.3), think of guidance as performing a gradient step that improves condition alignment: the direction is “semantic”, and the scale $\omega$ behaves like a step size / learning rate

Large $\omega$ therefore means overshooting: even if the direction is correct, the step can jump outside the calibrated neighborhood.

Conclusion: high-guidance artifacts are not mysterious—mathematically they come from (1) extrapolation, (2) renoising drift, and (3) too-large optimization steps.

5.1.1 Adaptive Projected Guidance (APG)

Idea. APG revisits CFG through the lens of gradient steps and shows that oversaturation (artifacts) are strongly tied to how the update direction is applied at high $w$. It proposes a lightweight inference-time modification that combines rescaling, projection, and a (reverse) momentum-style correction, allowing higher effective guidance without blowing up the trajectory. 3

Write the standard guidance difference in denoised prediction space:

\[\Delta_t \;=\; \hat{x}_0^{c}(x_t) - \hat{x}_0^{\varnothing}(x_t).\]CFG injects $(\omega-1)\Delta_t$ on top of the conditional branch. APG’s key observation is a directional decomposition: decompose $\Delta_t$ into a component parallel to the conditional denoised prediction $\hat{x}_0^{c}$ and a component orthogonal to it:

\[\Delta_t \;=\; \Delta_t^{\parallel} + \Delta_t^{\perp}, \qquad \Delta_t^{\parallel} = \operatorname{proj}_{\hat{x}_0^{c}}(\Delta_t), \qquad \Delta_t^{\perp} = \Delta_t - \Delta_t^{\parallel}.\]where

\[\Delta_t^{\parallel} = \operatorname{proj}_{\hat{x}_0^{c}}(\Delta_t) = \frac{\langle \Delta_t,\hat{x}_0^{c}\rangle}{\|\hat{x}_0^{c}\|^2}\,\hat{x}_0^{c},\]- the parallel component tends to behave like a gain / amplification along the current denoised signal, and is strongly correlated with oversaturation;

- the orthogonal component more often improves structure/quality without the same saturation blow-up.

APG replaces the raw update by a rebalanced update:

\[\widetilde{\Delta}_t(\eta) \;=\; \Delta_t^{\perp} + \eta\,\Delta_t^{\parallel},\]with $\eta < 1$, the purpose is to suppress the parallel components, and then uses the full guidance direction as follows

\[\hat{x}_{0,\text{APG}}(x_t) \;=\; \hat{x}_0^{c}(x_t) + (\omega-1)\,\widetilde{\Delta}_t(\eta).\]APG further treats the guidance increment like an optimization step and stabilizes its magnitude:

Rescaling or norm thresholding. Clip or shrink the guidance vector so its norm does not explode:

\[\widetilde{\Delta}_t \leftarrow \widetilde{\Delta}_t \cdot \min\Big(1,\; \frac{\tau}{\|\widetilde{\Delta}_t\|}\Big),\]so the effective step size remains bounded.

Reverse momentum ($\beta < 0$). Maintain a running average of recent updates and push away from repeated directions:

- standard momentum accumulates past directions ($\beta > 0$),

- reverse momentum ($\beta < 0$) discourages repeatedly amplifying the same harmful direction, improving stability in later steps.

Key Viewpoint: A subtle but crucial point: APG is not “project any prediction head”. The projection must be applied to the denoised prediction $\hat{x}_0$ (or $D_\theta$), otherwise the effect can be negligible. So if your model predicts $\epsilon$ (or $v$), you must convert to $\hat{x}_0$ first (Tweedie-style): $$ \hat{x}_0(x_t) = \frac{x_t - \sigma_t \,\hat{\epsilon}(x_t)}{\alpha_t}, $$ then apply APG in $\hat{x}_0$ space, then convert back if the sampler needs $\epsilon$.

5.1.2 CFG++: “CFG problems come from off-manifold, not diffusion”

CFG++ makes a strong claim: many CFG pathologies are better explained by off-manifold dynamics induced by guidance, rather than a fundamental flaw of diffusion sampling. It highlights two concrete causes:

- $\omega>1$ extrapolation beyond local manifold linearity,

- guided renoising drift (renoising introduces a nonzero offset from the manifold-consistent update).

CFG++ looks like “just replace extrapolation by interpolation”. Concretely, CFG++ replaces the CFG denoised prediction by an interpolation:

\[\hat{x}_0^{\lambda}(x_t) \;=\; (1-\lambda)\,\hat{x}_0^{\varnothing}(x_t) + \lambda\,\hat{x}_0^{c}(x_t), \qquad \lambda\in[0,1].\]So the method forbids extrapolation by construction. Then it performs sampling as:

- denoise using $\hat{x}_0^{\lambda}(x_t)$ (nudged toward the condition),

- renoise using the unconditional noise (keep the prior-consistent stochasticity / solver correction).

For DDIM-style updates, the canonical form is:

\[x_{t-1} = \sqrt{\bar{\alpha}_{t-1}}\,\hat{x}_0^{\lambda}(x_t) \;+\; \sqrt{1-\bar{\alpha}_{t-1}}\,\hat{\epsilon}_{\varnothing}(x_t),\]i.e. conditional in the denoise term, unconditional in the renoise term.

5.1.3 TCFG / TAG: Off-Manifold Components

APG and CFG++ can be seen as “projecting the CFG update back to a safer region” (typically in (\hat x_0)-space). A closely related geometric line makes the mechanism even more explicit:

- The harmful part of strong guidance is an off-manifold component of the update;

- keep / emphasize the on-manifold component (semantic refinement), and suppress or correct the off-manifold drift.

This viewpoint is operationalized by decomposing the score / increment into geometry-aware components and then reweighting them.

A unifying decomposition

Let a base sampler (DDIM / ODE solver / EDM step, etc.) produce a provisional update

\[\Delta_t^{\text{base}} \;:=\; x_{t-1}^{\text{base}} - x_t.\]A geometry-aware method introduces a reference direction \(u_t\) and splits the update into

\[\Delta_t^{\parallel} \;:=\; \Pi_{\parallel}(u_t)\,\Delta_t^{\text{base}},\qquad \Delta_t^{\perp} \;:=\; \Pi_{\perp}(u_t)\,\Delta_t^{\text{base}},\qquad \Delta_t^{\text{base}} = \Delta_t^{\parallel} + \Delta_t^{\perp},\]where \(\Pi_{\parallel},\Pi_{\perp}\) are orthogonal projectors (e.g., “radial vs tangential”, or “normal vs tangent” w.r.t. an intermediate manifold). The off-manifold drift typically manifests as:

- misaligned tangential components (moving sideways away from the conditional manifold), and/or

- wrong-radius / wrong-time components (moving to a point that looks like it belongs to a different noise level).

Below are three representative routes that explicitly implement “harmful = off-manifold”.

(1) TCFG: drop misaligned tangential components via SVD

Core intuition. In CFG we combine unconditional and conditional scores:

\[\widehat s_{\text{CFG}} = s_{\varnothing} + w\,(s_c - s_{\varnothing}) = (1-w)\,s_{\varnothing} + w\,s_c.\]When \(w\) is large, any misaligned component in \(s_{\varnothing}\) can be repeatedly injected at every step, accumulating as an off-manifold drift. TCFG 4 proposes to filter out the “less-aligned (tangential)” part of the unconditional score by SVD, so that the remaining unconditional component is better aligned with the conditional manifold.

Concretely, at each step we form a score matrix by concatenating the unconditional and conditional scores and perform SVD (details in the paper):

\[S_t := [\,s_{\varnothing}\;\; s_c\,] \in \mathbb{R}^{d\times 2}, \quad S_t = U\,\Sigma\,V^\top.\]We keep the leading singular vectors (interpreted as well-aligned “normal” directions) and drop the rest (interpreted as misaligned tangential directions):

\[\widetilde s_{\varnothing} := U_{[:,1:r]}\,U_{[:,1:r]}^\top\,s_{\varnothing},\]then run CFG using the filtered unconditional score

\[\widehat s_{\text{TCFG}} := \widetilde s_{\varnothing} + w\,(s_c - \widetilde s_{\varnothing}).\]This is exactly the paper’s narrative: “high singular-value subspace = shared / normal component; low singular-value subspace = tangential misalignment”, and TCFG “drops” the low-SV part of \(s_{\varnothing}\) to reduce off-manifold sampling.4

Why this is a clean ‘off-manifold’ story. The method does not change the conditional score \(s_c\); instead it prevents unconditional tangential components from fighting the conditional manifold direction over time. The paper reports that the similarity of singular vectors is high for dominant components and low for the rest, motivating the filtering.4

(2) TAG (Tangential Amplifying Guidance): preserve the radial term, amplify tangential refinement

There is also a different paper that uses the acronym TAG, standing for Tangential Amplifying Guidance. Despite the name collision with “Temporal Alignment Guidance” (below), it is deeply aligned with the same geometric thesis: separate an update into “safe vs harmful” components and reweight them.

The key move is to treat the noise schedule / SNR trajectory as prescribing a radial evolution, and interpret semantic structure as living in the tangential part. Using the current state as reference, define the unit direction and projectors5

\[\bar x_t := \frac{x_t}{\|x_t\|},\qquad \Pi_{\parallel} := \bar x_t\,\bar x_t^\top,\qquad \Pi_{\perp} := I - \Pi_{\parallel}.\]Let \(\Delta_t^{\text{base}}\) be the base increment from any solver. TAG reweights the decomposition: 5

\[\Delta_t^{\text{TAG}} := \lambda_{\parallel}\,\Pi_{\parallel}\,\Delta_t^{\text{base}} \; +\; \lambda_{\perp}\,\Pi_{\perp}\,\Delta_t^{\text{base}}, \qquad x_{t-1} := x_t + \Delta_t^{\text{TAG}}.\]In practice, the guidance effect is obtained by keeping the radial component nearly unchanged while amplifying the tangential component (\(\lambda_{\perp}>1\)), which the paper argues helps trajectories move along high-density directions (reducing hallucinations / mode interpolation) without breaking the schedule’s radius evolution.5

(3) TAG (Temporal Alignment Guidance): correct “wrong-time” off-manifold drift

A second “TAG” refers to Temporal Alignment Guidance: On-Manifold Sampling in Diffusion Models. Its definition of “off-manifold” is time-mismatch:

- during guidance (especially arbitrary / composite guidance), the state \(x_t\) may drift to look like it belongs to another marginal \(p_{t'}\),

- this time gap correlates with sample fidelity degradation.6

The method trains (or uses) a time predictor \(\tau_\phi(x_t)\) that estimates the current diffusion time given \(x_t\). Then it builds a temporal alignment term by differentiating a time-consistency loss w.r.t. \(x_t\):

\[\mathcal{T}(x_t, t) \;:=\; -\nabla_{x_t}\,\ell_t\big(\tau_\phi(x_t),\,t\big).\]This term is added as an attractive force that pulls $x_t$ back toward the correct time manifold during sampling (the paper presents an algorithmic template that combines property guidance and the temporal alignment term at each step).6

Key Viewpoint: TCFG and TAG look different on the surface (SVD filtering vs. projector reweighting vs. time correction), but they share the same geometric diagnosis: the guidance residual contains both on-manifold refinement and off-manifold drift.

A helpful implementation lens is: replace “scale the whole residual” with “scale only the right subspace”.

5.2 Internal Contrast Self-Guidance

Standard classifier-free guidance (CFG) assumes you can obtain a meaningful unconditional baseline \(u_{\varnothing}\), which practically implies (i) training with conditional dropout (null token), and (ii) two forward passes per denoising step. This is not just an efficiency issue—it becomes a methodological constraint:

- Architecture / training mismatch. Some pipelines (fine-tuned adapters, domain-specific conditional models, restoration pipelines, or non-text conditions) do not naturally expose a clean “null conditioning” behavior that matches the intended baseline.

- Task discrepancy. Even when implemented with a null token, \(u_{\varnothing}\) is trained for a different task (marginalized over conditions), so the CFG difference can mix multiple effects (prompt alignment, truncation / low-temperature sampling, and solver drift), and may overshoot at large \(\omega\).

- Unconditional generation & downstream tasks. CFG is inherently defined via “conditional minus unconditional”; it is therefore awkward (or unusable) when the goal is to improve unconditional sampling or when “empty prompt” does not mean “unconditional” (e.g., ControlNet with empty prompts, restoration). PAG explicitly targets this gap.

Internal-contrast self-guidance replaces the classic cond–uncond contrast with a cond–(cond-but-worse) contrast constructed internally from the model.

5.2.1 A unifying template: “good vs. bad” instead of “cond vs. uncond”

Let \(u_\theta(x_t,t,c)\) denote the model prediction in whatever parameterization your sampler uses (e.g., \(\hat{x}_0\)-prediction, \(\epsilon\)-prediction, or \(v\)-prediction). Standard CFG can be written abstractly as

\[u_{\text{CFG}}(x_t,t,c) \;=\; u_\theta(x_t,t,\varnothing) \;+\; \omega_{\text{cfg}}\Big(u_\theta(x_t,t,c)-u_\theta(x_t,t,\varnothing)\Big).\]Internal-contrast self-guidance introduces an internal degradation operator \(\mathcal{D}\) that produces a worse prediction while keeping the same condition:

\[u_\theta^{-}(x_t,t,c) \;\triangleq\; u_\theta\big(\mathcal{D}(x_t),\;t,\;c\big), \qquad u_\theta^{+}(x_t,t,c)\triangleq u_\theta(x_t,t,c).\]Then the guided prediction is

\[u_{\text{IC}}(x_t,t,c) \;=\; u_\theta^{+}(x_t,t,c) \;+\; \omega_{\text{ic}} \Big(u_\theta^{+}(x_t,t,c)-u_\theta^{-}(x_t,t,c)\Big). \label{eq:IC}\]In the continuous-time view, guidance is typically interpreted as modifying the score / vector field by taking a difference of two scores. For any two (time-marginal) densities \(p_+(x)\) and \(p_-(x)\),

\[\nabla_x \log p_+(x) - \nabla_x \log p_-(x) \;=\; \nabla_x \log \frac{p_+(x)}{p_-(x)}.\]CFG corresponds to \((p_+,p_-)=(p(\cdot\mid c)\,,p(\cdot))\). Internal-contrast self-guidance corresponds to \((p_+,p_-)=(p(\cdot\mid c),\,p_{\text{bad}}(\cdot\mid c))\), where \(p_{\text{bad}}\) is the implicit distribution induced by the degraded internal computation (blurred regions, perturbed attention, smoothed attention energy, or a weaker model). This makes explicit the shared philosophy:

- CFG: “push away from unconditional behavior.”

- Internal contrast: “push away from a known-bad conditional behavior.”

Key Viewpoint: SAG / PAG / SEG / AutoGuidance all instantiate the same template \ref{eq:IC}. The only difference is how to construct the “bad branch” \(u_\theta^{-}\): input-space corruption (SAG), attention-path corruption (PAG), energy-curvature smoothing (SEG), or a separately trained inferior model (AutoGuidance).

5.2.2 Self-Attention Guidance (SAG)

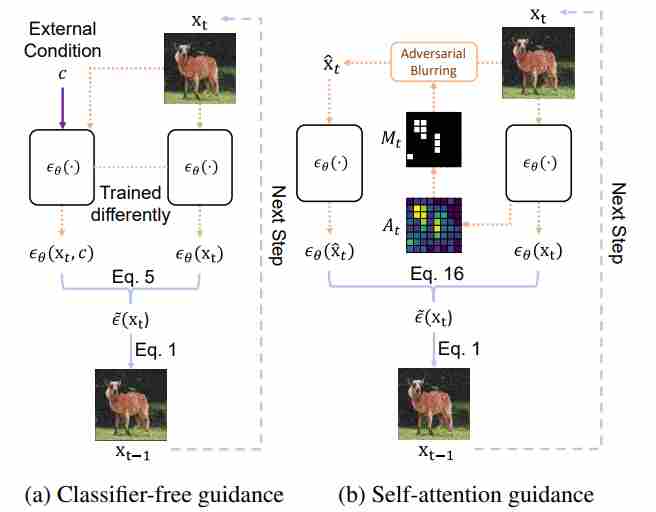

Idea. SAG extracts a saliency signal from self-attention maps inside the diffusion U-Net and constructs a “bad” sample by blurring/corrupting the regions the model attends to, then uses the prediction difference as guidance. The paper describes it as “adversarially blurs only the regions that diffusion models attend to at each iteration and guides them accordingly,” and shows it can be combined with conventional guidance for further gains.

How to construct the bad branch.

- Run the model once to obtain self-attention maps \(A_t\) (or a derived mask \(M_t\)).

Construct a perturbed latent \(\tilde{x}_t\) by blurring/noising the attended regions:

\[\begin{align} \tilde{x}_t \; & =\; \operatorname{Blur}(x_t\;,M_t) \\[10pt] \quad\text{or}\quad \tilde{x}_t \; & =\; (1-M_t)\odot x_t + M_t\odot \operatorname{Blur}(x_t). \end{align}\]- Run the model again (same $c$) to get the “bad” prediction \(u_\theta(\tilde{x}_t,t,c)\).

Guidance form. In the generic template:

\[u_{\text{SAG}} = u_\theta^{+} + \omega_{\text{sag}}(u_\theta^{+}-u_\theta^{-}).\]where

\[u_\theta^{+}=u_\theta(x_t,t,c),\qquad u_\theta^{-}=u_\theta(\tilde{x}_t,t,c),\]This matches our existing summary

\[g_{\text{SAG}}\approx u_\theta(x_t,t,c)-u_\theta(\tilde{x}_t,t,c)\]5.2.3 Perturbed Attention Guidance (PAG)

Idea. PAG constructs the “bad branch” by perturbing self-attention computation paths directly, instead of corrupting the latent in pixel/latent space. Concretely, it generates degraded-structure intermediates by substituting selected self-attention maps with an identity matrix, then guides sampling away from those degraded predictions.

PAG is explicitly motivated by the fact that CG/CFG “are often not applicable in unconditional generation or downstream tasks such as image restoration,” and targets improvements in both conditional and unconditional settings without extra modules/training.

How to construct the bad branch.

Let a self-attention layer produce attention weights

\[W \;=\; \operatorname{softmax}!\Big(\frac{QK^\top}{\sqrt{d}}\Big)\in\mathbb{R}^{N\times N}.\]PAG defines a perturbed attention \(\widetilde{W}\), or applied only to a subset of layers/heads/tokens. Running the U-Net with \(\widetilde{W}\) removes token-to-token mixing and yields a structurally degraded prediction \(u_\theta^{-}\).

Guidance form.

\[u_{\text{PAG}} = u_\theta^{+} + \omega_{\text{pag}}(u_\theta^{+}-u_\theta^{-}).\]where

\[u_\theta^{+}=u_\theta(x_t,t,c;,W),\qquad u_\theta^{-}=u_\theta(x_t,t,c;,\widetilde{W}),\]5.2.4 Smoothed Energy Guidance (SEG)

Idea. SEG makes the “bad/unconditional-like” branch by smoothing the attention energy landscape, specifically by reducing the curvature of the attention energy. It is described as a “training- and condition-free approach” that (i) defines an energy for self-attention, (ii) reduces its curvature, and (iii) uses the resulting output as an unconditional prediction.

How to construct the bad branch (high-level).

- Define an attention-energy view of the self-attention mechanism.

- Apply Gaussian smoothing (controlled by a kernel parameter) to reduce curvature while keeping guidance scale fixed.

- Implement the smoothing efficiently via query blurring, which is stated to be equivalent to blurring the full attention weights but without quadratic token complexity.

Guidance form. SEG naturally fits the internal-contrast template by treating the smoothed-attention output as \(u^{-}\):

\[u_\theta^{+}=u_\theta(x_t,t,c;,\text{original attention}),\qquad u_\theta^{-}=u_\theta(x_t,t,c;,\text{smoothed attention}),\]and the total direction:

\[u_{\text{SEG}} = u_\theta^{+} + \omega_{\text{seg}}(u_\theta^{+}-u_\theta^{-}).\]Interpretationally: SAG/PAG degrade by removing information (blur or identity attention), while SEG degrades by flattening the attention energy landscape—reducing sharp, high-curvature “overconfident” attention peaks that often correlate with side effects at high guidance.

5.2.5 AutoGuidance

Idea. AutoGuidance replaces the unconditional baseline by a bad version of the model itself (smaller and/or under-trained), using the same conditioning, thereby avoiding the “task discrepancy” between conditional and unconditional branches. The paper explicitly states that it guides using “a smaller, less-trained version of the model itself rather than an unconditional model,” and that it “does not suffer from the task discrepancy problem because we use an inferior version of the main model itself as the guiding model, with unchanged conditioning.”

How to construct the bad branch. Train or obtain an inferior model \(u_\phi\) that solves the same conditional task but is deliberately weaker (capacity/time degradations). Then:

\[u_\theta^{+}(x_t,t,c)=u_\theta(x_t,t,c), \qquad u_\theta^{-}(x_t,t,c)=u_\phi(x_t,t,c).\]Guidance form (CFG with “bad model” instead of “uncond”).

\[u_{\text{AG}}(x_t,t,c) \;=\; u_\theta(x_t,t,c) \;+\; \omega_{\text{ag}}\Big(u_\theta(x_t,t,c)-u_\phi(x_t,t,c)\Big).\]The key intuition given in the paper: when the strong and weak models agree, the correction is small; when they disagree, the difference indicates a direction toward better samples.

5.3 Dynamic Guidance Scheduling and Calibration

Standard CFG uses a single global guidance weight throughout the entire reverse-time trajectory:

\[s_{\mathrm{cfg}}(x_t,t,y)=s_u(x_t,t)+w\big(s_c(x_t,t,y)-s_u(x_t,t)\big),\qquad w \ge 1.\]This “fixed-$w$” design is simple, but it implicitly assumes that:

1) the conditional–unconditional discrepancy is equally informative at all $t$, and

2) the magnitude of the guidance increment is naturally calibrated across timesteps and prompts.

Both assumptions are empirically false and conceptually misaligned with diffusion/flow sampling dynamics 7 8 9.

In the early high-noise stage, the state is dominated by noise:

\[x_t=\alpha_t x_0+\sigma_t\varepsilon,\qquad \mathrm{SNR}(t)=\frac{\alpha_t^2}{\sigma_t^2}\ll 1.\]and the conditional–unconditional score difference is

\[s_c-s_u=\nabla_{x_t}\log p_t(y\mid x_t).\]which does not provide fine mode-specific semantics; instead, it approximately reduces to a coarse global drift

\[s_c-s_u \approx \frac{\alpha_t}{\sigma_t^2}(\bar\mu_y-\mu),\]where \(\bar\mu_y\) is the class-weighted conditional mean and \(\mu\) is the unconditional mean. Therefore, large guidance at this stage mainly amplifies a global bias term rather than useful fine-grained semantic structure. As a result, CFG induces early direction shift and norm inflation, steering trajectories toward the scaled mean \(\omega\bar\mu_y\). If the conditional distribution contains a dominant mode, this early displacement reduces later occupancy of weaker attraction basins, so diversity is already compromised before genuine mode separation becomes active.

In the intermediate denoising regime, the conditional posterior over semantic modes becomes most sensitive to the current state $x_t$. In a two-mode mixture, if \(r_1(x_t)\) denotes the posterior responsibility of mode 1 along the discriminative direction \(u=\langle x_t,\mu_1-\mu_2\rangle\), then

\[\frac{\partial r_1}{\partial u}=a_t,r_1(1-r_1),\]where \(a_t\) increases as noise decreases, while \(r_1(1-r_1)\) is maximal before mode assignment saturates. Hence the intermediate stage is exactly where the conditional correction becomes most informative and mode-selective. Amplifying it with a larger guidance scale therefore helps trajectories commit more decisively to the appropriate attraction basin, rather than merely inducing a global bias as in the early stage.

In the high-SNR tail regime, posterior mode responsibilities are already saturated, so guidance no longer meaningfully contributes to mode selection. Locally inside a selected basin $k$, the conditional score reduces to a linear restoring field

\[s_c(x_t,t,y)\approx -\frac{x_t-\alpha_t\mu_k}{\alpha_t^2\sigma_y^2+\sigma_t^2},\]i.e., a force pulling the trajectory toward the local mode center. Since this local conditional field is sharper than the unconditional one, increasing the guidance scale mainly strengthens the contraction rate

\[\kappa_{\mathrm{cfg}}(t)=\lambda_c(t)+(w-1)\big(\lambda_c(t)-\lambda_u(t)\big),\]which accelerates the collapse of nearby trajectories within the same mode. Thus, large late-stage guidance contributes little new semantic information but increasingly suppresses fine-grained variability, making a low guidance scale the principled choice in the tail stage.

5.3.1 Adaptive Guidance (AG)

An key insight in AG 10 is that as the number of sampling steps increases, the similarity between the outputs of the unconditional branch and the conditional branch becomes progressively higher.

Adaptive Guidance (AG) 10 leverages this observation by dynamically deciding when to apply CFG and when to rely solely on the conditional branch. Let

\[\gamma_t = \frac{\epsilon_\theta(x_t, t, c) \cdot \epsilon_\theta(x_t,t, \varnothing)}{\|\epsilon_\theta(x_t, t, c)\|\cdot \|\epsilon_\theta(x_t,t, \varnothing)\|}\]denote the cosine similarity between conditional and unconditional network outputs. Empirically, $\gamma_t$ increases monotonically over time and approaches 1 toward the end of the process, indicating nearly perfect alignment.

AG introduces a threshold $\bar{\gamma}\in[0,1]$ to determine the switch point:

When $\gamma_t < \bar{\gamma}$, CFG is executed as usual—combining conditional and unconditional predictions to strongly enforce textual conditioning.

\[{\mathrm g}_{\text{total}} = {\mathrm g}_{\text{uncond}} + w\cdot ({\mathrm g}_{\text{cond}} - {\mathrm g}_{\text{uncond}})\]When $\gamma_t \ge \bar{\gamma}$, the model stops computing the unconditional branch, using only the conditional output for subsequent steps.

\[{\mathrm g}_{\text{total}} = {\mathrm g}_{\text{cond}}\]

This adaptive truncation maintains the semantic fidelity established during early denoising while removing redundant computations in the later phase.

5.3.2 β-CFG:

A fixed guidance scale $w$ across all denoising steps is inappropriate. β-CFG 11 introduces a time-dependent and normalized guidance mechanism to stabilize this process. It replaces the constant $w$ with a dynamic function $ \beta(t) $ and introduces a normalization exponent $ \gamma $ to control the magnitude of the conditional correction:

\[{\mathrm g}_{\text{total}} = {\mathrm g}_{\text{uncond}} + \beta(t)\,\frac{w\cdot ({\mathrm g}_{\text{cond}} - {\mathrm g}_{\text{uncond}})}{\|{\mathrm g}_{\text{cond}} - {\mathrm g}_{\text{uncond}}\|^\gamma}\]This modification simultaneously ensures the manifold structure at both ends of the diffusion path and gradient stability.

Beta-Distribution Scheduling. The first key component is the β-function weighting:

\[\beta(t) = \frac{t^{a-1}(1-t)^{b-1}}{B(a,b)}, \quad a,b>1.\]This curve is zero at both ends $(\beta(0)=\beta(1)=0)$ and peaks in the middle, forming a “weak–strong–weak” pattern. Such a schedule satisfies the boundary conditions required to keep trajectories on the data manifold:

Hence, early and late denoising steps use almost pure unconditional predictions (stabilizing start and end), while the mid-range receives strong conditional influence—the region most responsible for semantic formation. This design directly addresses the three-phase mechanism of diffusion sampling:

$\gamma$-Normalization: Gradient Rescaling Perspective

The second component, $\gamma$-normalization, controls the effective step size of the guidance. As we had discussed before, CFG can be viewed as performing gradient ascent on an implicit energy function

\[\mathcal{F}_{\text{CFG}} = \tfrac{1}{2}\|{\mathrm g}_{\text{cond}} - {\mathrm g}_{\text{uncond}}\|^2, \quad \nabla_{ {\mathrm g}_{\text{cond}} }\mathcal{F}_{\text{CFG}} = ({\mathrm g}_{\text{cond}} - {\mathrm g}_{\text{uncond}}).\]where the difference \(\Delta=\nabla_{ {\mathrm g}_{\text{cond}} }\mathcal{F}_{\text{CFG}}\) represents the gradient ascent direction. Multiplying by \(1/\|\Delta\|^\gamma\) therefore acts as a rescaling schedule: Large $|\Delta|$: down-scale the update to prevent gradient explosion, while Small $|\Delta|$ amplify subtle conditional cues to avoid vanishing.

This normalization stabilizes the dynamics of CFG, keeping the trajectory within a numerically safe and geometrically valid region.

5.3.3 CFG-Zero★ (Flow Matching): projection-based rescale + early-step zero-init

CFG-Zero★ targets flow-matching / rectified-flow style samplers, where CFG is applied to a predicted velocity field (or an equivalent direction). The central idea is that the unconditional branch can be recalibrated to better match the conditional prediction via a 1D projection, producing a more stable mixture.

Optimized scale via projection. Consider scaling the unconditional prediction by a scalar $s_t$:

\[\tilde{u}_u \triangleq s_t\, u_\theta(x_t,t,\varnothing).\]Choose $s_t$ by minimizing the squared mismatch between conditional and scaled-unconditional predictions:

\[s_t^\star = \arg\min_{s}\; \big\|u_\theta(x_t,t,c) - s\,u_\theta(x_t,t,\varnothing)\big\|_2^2.\]This is a one-variable least-squares problem. Expanding and differentiating:

\[\begin{aligned} f(s) &= \|u_c - s u_u\|_2^2 = \langle u_c - s u_u,\; u_c - s u_u\rangle,\\[10pt] \Longrightarrow\quad \frac{d f}{ds} &= -2\langle u_u,\;u_c - s u_u\rangle = -2\langle u_u,u_c\rangle + 2s\|u_u\|^2. \end{aligned}\]Setting $df/ds=0$ gives the closed form:

\[s_t^\star= \frac{\langle u_\theta(x_t,t,c),\;u_\theta(x_t,t,\varnothing)\rangle} {\|u_\theta(x_t,t,\varnothing)\|_2^2}.\]CFG is then applied between $u_c$ and the scaled unconditional $\tilde{u}_u$:

\[u_{\text{CFG-Zero★}} = \tilde{u}_u + \omega\Big(u_c-\tilde{u}_u\Big) = s_t^\star u_u + \omega\Big(u_c - s_t^\star u_u\Big).\]When $s_t^\star\approx 1$, it reduces to standard CFG. When $s_t^\star$ differs from 1, the method effectively performs a “projection-calibrated” mixture.

Zero-init at the earliest steps. Empirically, the first reverse steps can be particularly sensitive in flow-matching samplers. CFG-Zero★ therefore also advocates an early-step suppression (a “zero-init”) so that the dynamics do not overreact to poorly calibrated directions at the very beginning. In the unifying lens of (Dyn-CFG), this is again a stage-aware schedule:

\[\text{very-early steps: }\;\omega(t)\approx 0 \quad\Longrightarrow\quad u_{\text{dyn}}\approx \tilde{u}_u \;\text{(or even }0\text{)}.\]

6. Guidance via Measurements (Inverse Problems)

This chapter covers measurement-conditioned generation, where the “condition” is not semantic text/class labels, but observations produced by a known forward process (blur kernel, downsampling, masking, tomography operator, Fourier magnitude, etc.). Since we will discuss inverse problems in depth elsewhere, we only establish the unifying lens and the canonical inference-time templates used by diffusion/flow samplers. For more details, please check the following paper:

6.1 Problem Setup: Forward Model and Posterior

Let the unknown clean signal/image be \(x\in\mathbb{R}^d\). Measurements \(y\) are generated by a (typically known) operator:

\[y = A(x) + \eta, \qquad \eta \sim \mathcal{N}(0,\sigma_y^2 I),\]where \(A(\cdot)\) can be linear (matrix \(A\)) or nonlinear (e.g., phase retrieval magnitude). The inverse problem is to sample from (or approximate) the posterior

\[p(x \mid y) \;\propto\; p(x)\,p(y\mid x),\]where \(p(x)\) is the learned data prior (captured implicitly by the pretrained diffusion/flow model), and \(p(y\mid x)\) is the measurement likelihood induced by \(A\).

6.2 From “Energy Guidance” to Measurement Guidance

A key reason measurement guidance naturally appears as an additive correction is the log-posterior decomposition:

\[\nabla_x \log p(x\mid y) = \nabla_x \log p(x) + \nabla_x \log p(y\mid x).\]Equivalently, define a measurement energy

\[E_{\text{meas}}(x;y) \;=\; -\log p(y\mid x) \quad(\text{up to a constant}),\]then

\[\nabla_x \log p(x\mid y) = \nabla_x \log p(x) - \nabla_x E_{\text{meas}}(x;y).\]In diffusion / flow sampling, we do not operate directly on clean \(x_0\), but on noisy states \(x_t\). A practical and widely used template is to impose measurement consistency through a proxy clean estimate \(\widehat{x}(x_t,t)\) (often \(\hat{x}_0(x_t,t)\)):

\[\begin{align} & E_{\text{meas}}(x_t,t;y) \;\propto\; \big\|A\,\widehat{x}(x_t,t) - y\big\|_2^2 \\[10pt] \quad\Rightarrow\quad & u_{\text{ctrl}}(x_t,t) \;=\; u_{\text{base}}(x_t,t)\;-\;\lambda(t)\,\nabla_{x_t}E_{\text{meas}}(x_t,t;y). \end{align}\]Interpretation. Compared to CFG (which injects a semantic increment), measurement guidance injects a data-consistency increment. Both are instances of “base dynamics + guided correction,” but the guidance signal here is determined by physics / measurement operators rather than learned \(p(c\mid x)\).

6.3 Practical Forms of the Measurement Gradient

For Gaussian noise and linear \(A\), a common choice is

\[E_{\text{meas}}(x_t,t;y) = \frac{1}{2\sigma_y^2}\,\|A\,\widehat{x}(x_t,t) - y\|_2^2.\]Then, by chain rule,

\[\nabla_{x_t}E_{\text{meas}} = \frac{1}{\sigma_y^2}\,J_{\widehat{x}}(x_t,t)^\top\,A^\top\big(A\,\widehat{x}(x_t,t)-y\big),\]where \(J_{\widehat{x}}\) is the Jacobian of \(\widehat{x}\) wrt \(x_t\). In implementations one typically avoids explicit Jacobians and uses autodiff to backprop through \(\widehat{x}\) and $A$.

For nonlinear \(A(\cdot)\), replace \(A\,\widehat{x}\) by \(A(\widehat{x})\) and backprop through \(A(\cdot)\) if it is differentiable (or through a differentiable surrogate if not).

6.4 Two Canonical Templates: Soft vs Hard Data Consistency

Soft consistency: gradient correction (MAP / posterior-inspired). At each sampling step, do a base update, then take a small step that reduces measurement residual.

Hard consistency: projection / proximal enforcement. When the feasible set is easy to enforce,

\[\mathcal{S}=\{x:\;A x=y\}\quad\text{or}\quad \mathcal{S}=\{x:\;\|Ax-y\|\le \epsilon\},\]one can apply a projection/prox step after the base update:

\[x \leftarrow x + \Delta t\,u_{\text{base}}(x,t), \qquad x \leftarrow \Pi_{\mathcal{S}}(x).\]This is the operator-splitting view: prior step (diffusion/flow) + data-consistency step (projection/prox).

7. Inference-time Image Editing

This chapter focuses on inference-time editing: we keep the pretrained diffusion model fixed (no fine-tuning), and we do not run an outer-loop optimization over prompts/latents (so methods like Null-text optimization / prompt embedding optimization are not included). Instead, we edit by intervening directly in the sampling dynamics—either by choosing a special initialization, or by modifying intermediate tensors (attention/features), or by enforcing region constraints.

Throughout, let the diffusion model be written in the standard noise-prediction form:

\[q(x_t\mid x_0)=\mathcal N\!\left(\sqrt{\bar\alpha_t},x_0,\;(1-\bar\alpha_t)\mathbf I\right),\qquad \epsilon_\theta(x_t,t,c)\approx \epsilon\]and the usual estimate of the clean image from a noisy latent: